Kubernetes has changed the way we manage containerized applications, and at its core lies a single building block: the pod. If you’re diving into Kubernetes, understanding pods is essential. A pod does not have to hold just one container, though. Multi-container pods house several containers that are scheduled together and work as one cohesive unit.

Imagine deploying a web server alongside an application that manages data storage, all within a single pod. This setup not only simplifies communication between containers but also optimizes resource utilization. As organizations increasingly adopt microservices architectures, mastering multi-container pods becomes crucial for developers and DevOps teams alike.

In this guide, we’ll explore what makes a Kubernetes pod tick, delve into the benefits of using multi-container setups, outline different types of configurations available to users, and share some best practices for effective management. Ready to unlock the full potential of your Kubernetes environment? Let’s get started!

What is a Kubernetes Pod?

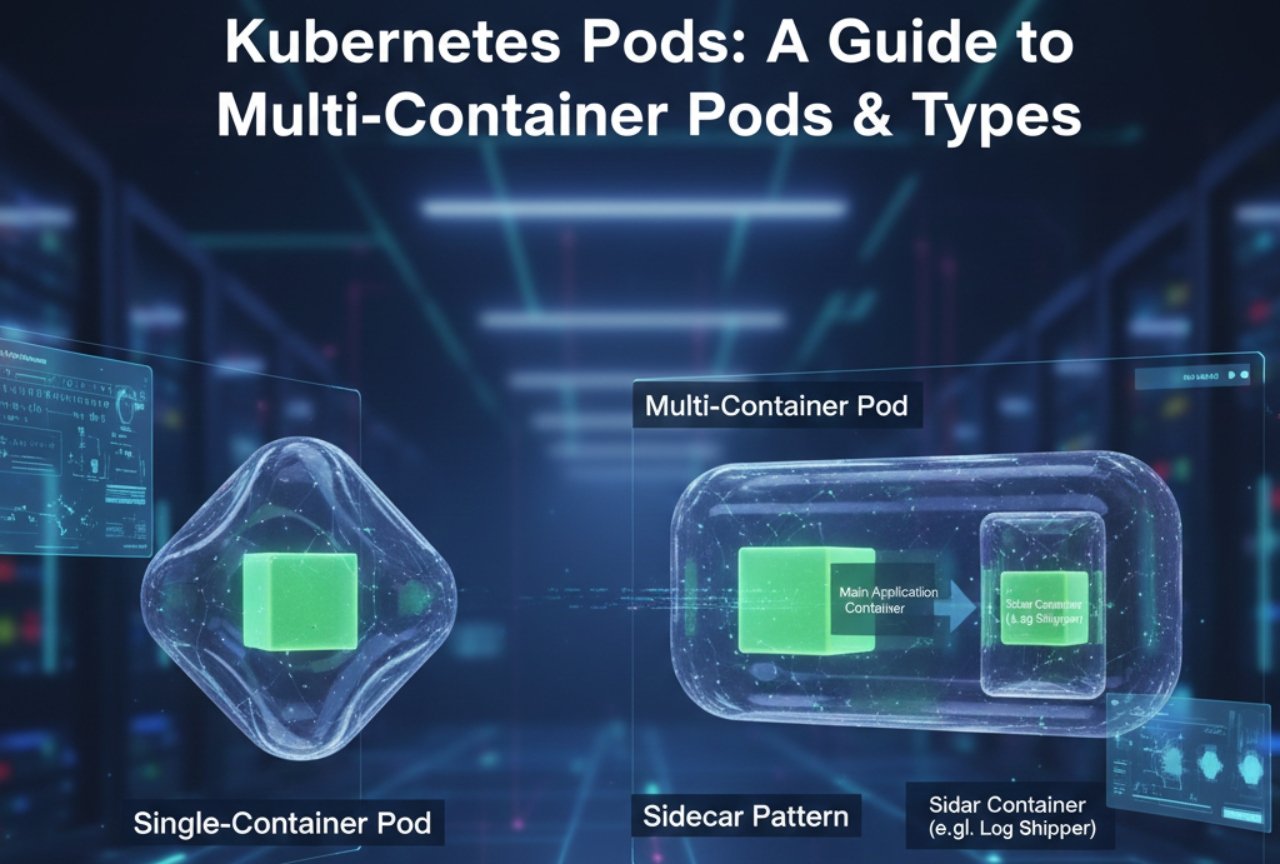

A Kubernetes pod is the smallest deployable unit in the Kubernetes architecture. It represents a logical host for one or more containers that share storage, networking, and specifications on how to run these containers.
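As a minimal sketch of what such a unit looks like, a single-container pod manifest might read as follows. The pod name, label, and image tag are illustrative choices, not values taken from any particular setup.

```yaml
# Minimal pod: one container, no volumes.
# The name, label, and image tag are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: hello-pod
  labels:
    app: hello
spec:
  containers:
    - name: web
      image: nginx:1.27
      ports:
        - containerPort: 80
```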

All containers in a pod share one network namespace, so they have the same IP address and port space and can reach each other over localhost. They can also mount the same volumes, which is what lets a pod run several cooperating processes as a single unit.

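Pods are ephemeral by nature. They are created and destroyed as demand changes, and a terminated pod is never brought back. If the pod is managed by a controller such as a Deployment, ReplicaSet, or StatefulSet, that controller creates a replacement; a bare pod created on its own is simply gone.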

The pod is also the unit that tooling operates on: `kubectl logs` and `kubectl describe` address a pod and its containers, and status is reported per container. Treating a group of cooperating containers as one object keeps deployment and troubleshooting simpler.

Why Use Multi-Container Pods?

Multi-container pods offer a powerful way to enhance application functionality. By grouping related containers together, they can share resources and communicate efficiently within the same network namespace.

This close proximity reduces latency between containers. For instance, a web server container can reach a helper such as a local cache or proxy over localhost, without the traffic ever leaving the pod.
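To make the shared network namespace concrete, here is a small sketch with two containers in one pod. The pod and container names are hypothetical, and the second container is only a stand-in that polls the first over the loopback address.

```yaml
# Two containers in one pod share a network namespace.
# Names and image tags are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: localhost-demo
spec:
  containers:
    - name: web
      image: nginx:1.27
      ports:
        - containerPort: 80
    - name: poller
      image: busybox:1.36
      # 127.0.0.1 here is the pod's loopback interface,
      # so this request reaches the "web" container.
      command: ["sh", "-c"]
      args:
        - |
          while true; do
            wget -q -O /dev/null http://127.0.0.1:80 && echo "web is reachable";
            sleep 10;
          done
```

Because both containers share one port space, they cannot both listen on the same port.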

Resource sharing also maximizes efficiency. Containers within the same pod can mount the same volumes to exchange files, which simplifies management and reduces redundancy. If the data must outlive the pod, back the volume with a PersistentVolumeClaim.
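A shared volume could be sketched as below, assuming an `emptyDir` volume, which lives only as long as the pod. The names and mount paths are illustrative: one container writes a page, and the other serves it.

```yaml
# Both containers mount the same emptyDir volume.
# emptyDir data is deleted when the pod is removed.
apiVersion: v1
kind: Pod
metadata:
  name: shared-volume-demo
spec:
  volumes:
    - name: site-content
      emptyDir: {}
  containers:
    - name: content-writer
      image: busybox:1.36
      command: ["sh", "-c"]
      args:
        - |
          while true; do date > /work/index.html; sleep 5; done
      volumeMounts:
        - name: site-content
          mountPath: /work
    - name: web
      image: nginx:1.27
      volumeMounts:
        - name: site-content
          mountPath: /usr/share/nginx/html   # nginx's default document root
          readOnly: true
```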

Scaling works at the pod level. The pod is the unit Kubernetes replicates, so all of its containers scale together rather than one by one. This suits helpers that should exist once per application instance; components that need to scale independently belong in separate pods.

Using multi-container pods fosters modularity as well. Each concern lives in its own image, which can be built, versioned, and reused separately, while the containers are still deployed and scheduled together as one pod.

The Different Types of Multi-Container Pods

Multi-container pods in Kubernetes come with various configurations, each serving specific needs.

The most common types are sidecar, ambassador, and adapter containers. Sidecar containers enhance the functionality of the primary application by managing tasks such as logging or monitoring without altering the main codebase.
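One way a logging sidecar might look is sketched here. The application container is a stand-in that appends lines to a file, and the sidecar streams that file to its own stdout; all names and paths are hypothetical.

```yaml
# Sidecar sketch: the app writes a log file, the sidecar streams it.
apiVersion: v1
kind: Pod
metadata:
  name: sidecar-demo
spec:
  volumes:
    - name: app-logs
      emptyDir: {}
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c"]
      args:
        - |
          while true; do
            echo "$(date) request handled" >> /var/log/app/app.log;
            sleep 5;
          done
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
    - name: log-tailer
      image: busybox:1.36
      # -F keeps retrying until the file exists, then follows it.
      command: ["sh", "-c", "tail -F /var/log/app/app.log"]
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
          readOnly: true
```

The application image needs no changes, because the sidecar only reads what the application already writes.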

Ambassador containers act as proxies for the primary container. They facilitate communication between different services or even external applications while maintaining a consistent interface.
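An ambassador could be sketched as follows. The backend hostname `redis.example.internal` is a placeholder. The sketch also assumes the `alpine/socat` image runs `socat` as its entrypoint, which is worth verifying before use. The application only ever talks to the loopback address, and the ambassador forwards the connection.

```yaml
# Ambassador sketch: the app connects to 127.0.0.1:6379,
# and the ambassador relays traffic to the real backend.
apiVersion: v1
kind: Pod
metadata:
  name: ambassador-demo
spec:
  containers:
    - name: app
      image: redis:7          # used here only for its redis-cli client
      command: ["sh", "-c"]
      args:
        - |
          while true; do
            redis-cli -h 127.0.0.1 -p 6379 ping;
            sleep 10;
          done
    - name: ambassador
      image: alpine/socat     # assumption: entrypoint is socat; pin a tag in practice
      args:
        - "TCP-LISTEN:6379,fork,reuseaddr"
        - "TCP:redis.example.internal:6379"   # hypothetical backend address
```

Pointing the application at a different backend then means changing only the ambassador's arguments.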

Adapter containers transform the output of the primary container, such as logs or metrics, into the format an external system expects. This setup is useful when integrating legacy applications with modern monitoring or logging stacks.
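As a rough illustration of the adapter idea, the sketch below rewrites a plain-text log into JSON lines. The log format, field names, and paths are invented for the example, and the shell loop does no escaping of quotes inside messages.

```yaml
# Adapter sketch: converts "<epoch> <LEVEL> <message>" lines to JSON.
apiVersion: v1
kind: Pod
metadata:
  name: adapter-demo
spec:
  volumes:
    - name: app-logs
      emptyDir: {}
  containers:
    - name: legacy-app
      image: busybox:1.36
      command: ["sh", "-c"]
      args:
        - |
          while true; do
            echo "$(date +%s) WARN disk usage high" >> /var/log/app/app.log;
            sleep 5;
          done
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
    - name: json-adapter
      image: busybox:1.36
      command: ["sh", "-c"]
      args:
        - |
          tail -F /var/log/app/app.log | while read -r ts level msg; do
            echo "{\"ts\":$ts,\"level\":\"$level\",\"msg\":\"$msg\"}";
          done
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
          readOnly: true
```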

Each type plays a unique role and can significantly impact how microservices interact within your architecture. Understanding these distinctions helps you choose the right multi-container strategy for your deployment needs.

How to Create and Manage Multi-Container Pods

Creating and managing multi-container pods in Kubernetes involves several straightforward steps. Begin by defining your pod specification in a YAML file, where you can outline each container’s details.

Use the `kubectl apply -f your-pod-file.yaml` command to deploy the pod. The command creates the pod, and the kubelet then starts all of its containers on the same node, within the same network namespace.

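Once deployed, check the pod with `kubectl get pods`. The READY column shows how many of its containers are ready (for example `2/2`). To read the logs of one container, pass its name with `-c`, as in `kubectl logs <pod-name> -c <container-name>`.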

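For updates or changes, modify the YAML file as needed and reapply it with the same command. Note that most fields of a running pod are immutable (the container image is one of the few exceptions), so a bare pod usually has to be deleted and recreated. For rolling updates without downtime, wrap the pod template in a Deployment, which replaces pods gradually.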
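Rolling updates are a feature of the Deployment controller rather than of a standalone pod. The sketch below assumes the multi-container pod is wrapped in a Deployment; the names, replica count, and rollout settings are illustrative.

```yaml
# Deployment wrapping a two-container pod template.
# Changing the template and re-applying triggers a rolling update.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-with-helper
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0   # keep all desired replicas available
      maxSurge: 1         # allow one extra pod during the rollout
  selector:
    matchLabels:
      app: web-with-helper
  template:
    metadata:
      labels:
        app: web-with-helper
    spec:
      containers:
        - name: web
          image: nginx:1.27
        - name: helper
          image: busybox:1.36
          command: ["sh", "-c", "while true; do sleep 3600; done"]
```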

Don’t forget to implement proper logging and monitoring tools for better visibility into how each container is performing within its pod environment.

Best Practices for Managing Multi-Container Pods

Managing multi-container pods requires careful attention to detail. Start by deciding how the containers communicate: use localhost for network calls and shared volumes for file exchange, which also minimizes redundancy.

Monitoring is essential. Use tools such as Prometheus to collect container metrics and Grafana to visualize them. This helps in identifying bottlenecks before they become issues.

Resource allocation must be precise. Set CPU and memory requests and limits for each container within the pod. The scheduler places the pod using the combined requests of its containers, so an oversized helper costs capacity on every replica.
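Per-container resource settings might look like the sketch below. The numbers are placeholders to show the shape of the configuration, not sizing recommendations.

```yaml
# Each container declares its own requests and limits.
apiVersion: v1
kind: Pod
metadata:
  name: resources-demo
spec:
  containers:
    - name: web
      image: nginx:1.27
      resources:
        requests:
          cpu: 250m
          memory: 128Mi
        limits:
          cpu: 500m
          memory: 256Mi
    - name: helper
      image: busybox:1.36
      command: ["sh", "-c", "while true; do sleep 3600; done"]
      resources:
        requests:
          cpu: 50m
          memory: 32Mi
        limits:
          cpu: 100m
          memory: 64Mi
```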

Keep your configurations organized using ConfigMaps and Secrets. Put ordinary settings in ConfigMaps and sensitive values in Secrets, so environment-specific settings can be managed without hardcoding them into applications or images.
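A sketch of how both containers in a pod could consume the same configuration follows. The keys `LOG_LEVEL` and `API_TOKEN`, the object names, and the placeholder value are all hypothetical.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
data:
  LOG_LEVEL: info
---
apiVersion: v1
kind: Secret
metadata:
  name: app-secret
type: Opaque
stringData:
  API_TOKEN: replace-me   # placeholder; never commit real secrets
---
apiVersion: v1
kind: Pod
metadata:
  name: config-demo
spec:
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "echo \"log level: $LOG_LEVEL\"; sleep 3600"]
      envFrom:
        - configMapRef:
            name: app-config      # every key becomes an env variable
      env:
        - name: API_TOKEN
          valueFrom:
            secretKeyRef:
              name: app-secret
              key: API_TOKEN
    - name: helper
      image: busybox:1.36
      command: ["sh", "-c", "echo \"helper log level: $LOG_LEVEL\"; sleep 3600"]
      envFrom:
        - configMapRef:
            name: app-config      # same settings, no duplication
```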

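Implement health checks for all containers within the pod. A failing liveness probe makes the kubelet restart that container, while a readiness probe controls whether the pod receives Service traffic. A pod counts as ready only when all of its containers are ready, which improves reliability and minimizes downtime.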
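Probes are configured per container. The following sketch uses illustrative paths, ports, and timings; real values depend on how the application starts up and behaves.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: probes-demo
spec:
  containers:
    - name: web
      image: nginx:1.27
      ports:
        - containerPort: 80
      readinessProbe:           # gates Service traffic to the pod
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 5
        periodSeconds: 10
      livenessProbe:            # failure restarts this container
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 15
        periodSeconds: 20
    - name: helper
      image: busybox:1.36
      command: ["sh", "-c", "touch /tmp/healthy; while true; do sleep 3600; done"]
      livenessProbe:
        exec:
          command: ["cat", "/tmp/healthy"]   # succeeds while the file exists
        periodSeconds: 10
```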

Real World Examples of Successful Multi-Container Pod Implementations

Companies like Spotify utilize multi-container pods to streamline their microservices architecture. By housing related services together, they reduce latency and enhance communication efficiency. This setup allows for seamless updates without affecting the entire application.

Another example is Airbnb, which employs multi-container pods for its recommendation engine. Each pod runs different components of the service but shares resources effectively. This leads to improved performance during peak usage times.

Netflix also benefits from this approach by managing its vast content delivery network through multi-container pods. These facilitate easy scaling and better resource allocation as demand fluctuates.

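GitLab uses multi-container pods in its CI/CD tooling: the GitLab Runner Kubernetes executor runs each job in a pod that contains a build container, a helper container, and one container for every service the job defines. This keeps the dependencies of a job next to the build while maintaining consistency throughout development stages.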

Conclusion

Kubernetes is transforming the way we deploy and manage applications. Understanding the concept of Pods, especially multi-container Pods, is crucial for any developer or operations team looking to harness this power.

Multi-container Pods offer unique advantages. They enable better resource sharing and can simplify application deployment by keeping related components close together. Moreover, they enhance communication between containers since they share the same network namespace.

Diving into the various types of multi-container Pods helps teams choose the right architecture for their specific needs. Whether it’s sidecar patterns that extend functionality or ambassador patterns that handle service interactions, each type serves a distinct purpose in an application’s lifecycle.

Creating and managing these pods requires knowledge of Kubernetes commands and configurations. It’s essential to monitor performance and troubleshoot effectively as your deployments scale.

Best practices help maintain efficiency while minimizing complexity when working with multi-container Pods. Emphasizing modular design, leveraging health checks, and implementing proper logging are key strategies that lead to smoother operations.

Real-world implementations showcase how companies leverage multi-container architecture to solve complex problems efficiently. From handling microservices dependencies to optimizing resource use across cloud environments, success stories abound in adopting this model.

The landscape of container orchestration continues evolving rapidly. As Kubernetes matures, so will its capabilities around pod management – making continuous learning essential for anyone involved in cloud-native development or IT operations.